5 Years Later: OpenAJAX Who?

Five years ago the OpenAjax Alliance was founded with the intention of providing interoperability between what was quickly becoming a morass of AJAX-based libraries and APIs. Where is it today, and why has it failed to achieve more prominence?

I recently stumbled over a nearly five-year-old article I wrote in 2006 for Network Computing on the OpenAjax initiative. Remember, AJAX and Web 2.0 were just coming of age then, and mentions of Web 2.0 or AJAX were much like those of “cloud” today. You couldn’t turn around without hearing someone promoting their solution by associating it with Web 2.0 or AJAX. After reading the opening paragraph I clearly remembered writing the article and being skeptical, even then, of what impact such an alliance would have on the industry. Being a developer by trade I’m well aware of how impactful “standards” and “specifications” really are in the real world, but the problem – interoperability across a growing field of JavaScript libraries – seemed at the time real and imminent, so there was a need for someone to address it before it got completely out of hand.

“With the OpenAjax Alliance comes the possibility for a unified language, as well as a set of APIs, on which developers could easily implement dynamic Web applications. A unified toolkit would offer consistency in a market that has myriad Ajax-based technologies in play, providing the enterprise with a broader pool of developers able to offer long term support for applications and a stable base on which to build applications. As is the case with many fledgling technologies, one toolkit will become the standard – whether through a standards body or by de facto adoption – and Dojo is one of the favored entrants in the race to become that standard.” -- AJAX-based Dojo Toolkit, Network Computing, Oct 2006

The goal was simple: interoperability.
The way in which the alliance went about achieving that goal, however, may have something to do with its lackluster performance lo these past five years and its descent into obscurity.

5 YEAR ACCOMPLISHMENTS of the OPENAJAX ALLIANCE

The OpenAjax Alliance members have not been idle. They have published several very complete and well-defined specifications including one “industry standard”: OpenAjax Metadata.

OpenAjax Hub

The OpenAjax Hub is a set of standard JavaScript functionality defined by the OpenAjax Alliance that addresses key interoperability and security issues that arise when multiple Ajax libraries and/or components are used within the same web page. (OpenAjax Hub 2.0 Specification)

OpenAjax Metadata

OpenAjax Metadata represents a set of industry-standard metadata defined by the OpenAjax Alliance that enhances interoperability across Ajax toolkits and Ajax products. (OpenAjax Metadata 1.0 Specification)

OpenAjax Metadata defines Ajax industry standards for an XML format that describes the JavaScript APIs and widgets found within Ajax toolkits. (OpenAjax Alliance Recent News)

It is interesting to see the calling out of XML as the format of choice on the OpenAjax Metadata (OAM) specification given the recent rise to ascendancy of JSON as the preferred format for developers for APIs. Granted, when the alliance was formed XML was all the rage and it was believed it would be the dominant format for quite some time given the popularity of similar technological models such as SOA, but still – the reliance on XML while the plurality of developers race to JSON may provide some insight on why OpenAjax has received very little notice since its inception.

Ignoring the XML factor (which undoubtedly is a fairly impactful one) there is still the matter of how the alliance chose to address run-time interoperability with OpenAjax Hub (OAH) – a hub. A publish-subscribe hub, to be more precise, in which OAH mediates for various toolkits on the same page.
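To make the publish-subscribe idea concrete, here is a minimal Python sketch of a hub mediating between two components on the same page. It is illustrative only: `ToyHub`, its method names, and the topic strings are hypothetical and are not taken from the OpenAjax Hub specification, which defines its own JavaScript API.

```python
from collections import defaultdict
from typing import Any, Callable

# Handler signature is an assumption made for this sketch.
Handler = Callable[[str, Any], None]


class ToyHub:
    """Hypothetical in-page publish-subscribe hub (not the OpenAjax Hub API)."""

    def __init__(self) -> None:
        # Maps a topic name to the handlers registered for it.
        self._handlers = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        """Register a handler to be called for every message on `topic`."""
        self._handlers[topic].append(handler)

    def publish(self, topic: str, payload: Any) -> int:
        """Deliver `payload` to each subscriber of `topic`; return the count."""
        handlers = list(self._handlers.get(topic, []))
        for handler in handlers:
            handler(topic, payload)
        return len(handlers)


# Two components standing in for widgets built on different toolkits.
hub = ToyHub()
chart_updates = []
hub.subscribe("grid.rowSelected", lambda topic, row: chart_updates.append(row["id"]))

delivered = hub.publish("grid.rowSelected", {"id": 7})  # one subscriber is called
ignored = hub.publish("grid.rowDeleted", {"id": 7})     # nobody is listening
```

The point of the design is that the publisher and the subscriber never call each other directly; they only agree on a topic name and a payload shape, which is what lets components from different toolkits coexist on one page.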
Don summed it up nicely during a discussion on the topic: it’s page-level integration. This is a very different approach to the problem than it first appeared the alliance would take. The article on the alliance and its intended purpose five years ago clearly indicates where I thought this was going – and where it should go: an industry standard model and/or set of APIs to which other toolkit developers would design and write such that the interface (the method calls) would be unified across all toolkits while the implementation would remain whatever the toolkit designers desired.

I was clearly under the influence of SOA and its decouple-everything premise. Come to think of it, I still am, because interoperability assumes such a model – always has, likely always will. Even in the network, at the IP layer, we have standardized interfaces with vendor implementation being decoupled and completely different at the code base. An Ethernet header is always in a specified format, and it is that standardized interface that makes the Net go over, under, around and through the various routers and switches and components that make up the Internets with alacrity. Routing problems today are caused by human error in configuration or failure – never incompatibility in form or function.

Neither specification has really taken that direction. OAM – as previously noted – standardizes on XML and is primarily used to describe APIs and components – it isn’t an API or model itself. The Alliance wiki describes the specification: “The primary target consumers of OpenAjax Metadata 1.0 are software products, particularly Web page developer tools targeting Ajax developers.”

Very few software products have implemented support for OAM. IBM, a key player in the Alliance, leverages the OpenAjax Hub for secure mashup development and also implements OAM in several of its products, including Rational Application Developer (RAD) and IBM Mashup Center.
Eclipse also includes support for OAM, as does Adobe Dreamweaver CS4. The IDE working group has developed an open source set of tools based on OAM, but what appears to be missing is adoption of OAM by producers of favored toolkits such as jQuery, Prototype and MooTools. Doing so would certainly make development of AJAX-based applications within development environments much simpler and more consistent, but it does not appear to be gaining widespread support or mindshare despite IBM’s efforts.

The focus of the OpenAjax interoperability efforts appears to be on a hub / integration method of interoperability, one that is certainly not in line with reality. While developers may at times combine JavaScript libraries to build the rich, interactive interfaces demanded by consumers of a Web 2.0 application, this is the exception and not the rule, and the pub/sub basis of OpenAjax, which implements a secondary event-driven framework, seems overkill. Conflicts between libraries, load times dragged down by the inclusion of multiple files, and the desire for simplicity tend to drive developers to a single library when possible (which is most of the time).

It appears, simply, that the OpenAjax Alliance – driven perhaps by active members for whom solutions providing integration and hub-based interoperability are typical (IBM, BEA (now Oracle), Microsoft and other enterprise heavyweights) – has chosen a target in another field; one on which developers today are just not playing. It appears OpenAjax tried to bring an enterprise application integration (EAI) solution to a problem that didn’t – and likely won’t ever – exist. So it’s no surprise to discover that references to and activity from OpenAjax are nearly zero since 2009.
Given the statistics showing the rise of jQuery – both as a percentage of site usage and developer usage – to the top of the JavaScript library heap, it appears that at least the prediction that “one toolkit will become the standard – whether through a standards body or by de facto adoption” was accurate. Of course, since that’s always the way it works in technology, it was kind of a sure bet, wasn’t it?

WHY INFRASTRUCTURE SERVICE PROVIDERS and VENDORS CARE ABOUT DEVELOPER STANDARDS

You might notice in the list of members of the OpenAjax Alliance several infrastructure vendors: folks who produce application delivery controllers, switches and routers and security-focused solutions. This is not uncommon, nor should it seem odd to the casual observer. All data flows, ultimately, through the network and thus every component that might need to act in some way upon that data needs to be aware of and knowledgeable regarding the methods used by developers to perform such data exchanges.

In the age of hyper-scalability and über security, it behooves infrastructure vendors – and increasingly cloud computing providers that offer infrastructure services – to be very aware of the methods and toolkits being used by developers to build applications. Applying security policies to JSON-encoded data, for example, requires very different techniques and skills than would be the case for XML-formatted data. AJAX-based applications, a.k.a. Web 2.0, require different scalability patterns to achieve maximum performance and utilization of resources than is the case for traditional form-based, HTML applications. The type of content as well as the usage patterns for applications can dramatically impact the application delivery policies necessary to achieve operational and business objectives for that application.

As developers standardize through selection and implementation of toolkits, vendors and providers can then begin to focus solutions specifically for those choices.
Templates and policies geared toward optimizing and accelerating jQuery, for example, are possible and probable. Being able to provide pre-developed and tested security profiles specifically for jQuery reduces the time to deploy such applications in a production environment by eliminating the test and tweak cycle that occurs when applications are tossed over the wall to operations by developers.

For example, the jQuery.ajax() documentation states:

“By default, Ajax requests are sent using the GET HTTP method. If the POST method is required, the method can be specified by setting a value for the type option. This option affects how the contents of the data option are sent to the server. POST data will always be transmitted to the server using UTF-8 charset, per the W3C XMLHTTPRequest standard. The data option can contain either a query string of the form key1=value1&key2=value2, or a map of the form {key1: 'value1', key2: 'value2'}. If the latter form is used, the data is converted into a query string using jQuery.param() before it is sent. This processing can be circumvented by setting processData to false. The processing might be undesirable if you wish to send an XML object to the server; in this case, change the contentType option from application/x-www-form-urlencoded to a more appropriate MIME type.”

Web application firewalls that may be configured to detect exploitation of such data – attempts at SQL injection, for example – must be able to parse this data in order to make a determination regarding the legitimacy of the input. Similarly, application delivery controllers and load balancing services configured to perform application layer switching based on data values or submission URI will also need to be able to parse and act upon that data. That requires an understanding of how jQuery formats its data and what to expect, such that it can be parsed, interpreted and processed.
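As a rough illustration of that parsing requirement, the Python sketch below serializes a map of fields into the key1=value1&key2=value2 form and then decodes it again the way an intermediary would have to before inspecting values. The function names and the tiny signature list are hypothetical and deliberately naive; this is not how any particular web application firewall works, only a sketch of why the content type and encoding must be understood first.

```python
from typing import Dict, List
from urllib.parse import parse_qs, urlencode

FORM_TYPE = "application/x-www-form-urlencoded"

# Deliberately naive, hypothetical signatures; real WAF rules are far richer.
SUSPICIOUS_FRAGMENTS = ("' or ", "union select", "--")


def to_query_string(data: Dict[str, str]) -> str:
    """Serialize a map of fields into a form-encoded query string."""
    return urlencode(data)


def flag_suspicious_fields(body: str, content_type: str) -> List[str]:
    """Return names of form fields whose decoded value matches a signature."""
    if not content_type.lower().startswith(FORM_TYPE):
        # XML or JSON bodies would need a different parser entirely.
        raise ValueError("body is not form-encoded; a different parser is needed")
    fields = parse_qs(body, keep_blank_values=True)  # percent-decodes the values
    flagged = []
    for name, values in fields.items():
        if any(frag in value.lower() for value in values for frag in SUSPICIOUS_FRAGMENTS):
            flagged.append(name)
    return flagged


plain = to_query_string({"key1": "value1", "key2": "value2"})
attack = to_query_string({"user": "alice", "comment": "x' OR '1'='1"})
flagged = flag_suspicious_fields(attack, FORM_TYPE + "; charset=UTF-8")
```

Note that the suspicious value only becomes visible after decoding: on the wire the quote characters are percent-encoded, so an inspection step that skips the parsing stage would be matching against the wrong bytes.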
By understanding jQuery – and other developer toolkits and standards used to exchange data – infrastructure service providers and vendors can more readily provide security and delivery policies tailored to those formats natively, which greatly reduces the impact of intermediate processing on performance while ensuring the secure, healthy delivery of applications.

* API Jabberwocky: You Say Tomay-to and I Say Potah-to
* OpenAjax Metadata 1.0 and the Adobe Dreamweaver Widget Browser
* OpenAjax Alliance
* AJAX-based Dojo Toolkit
* The Stealthy Ascendancy of JSON
* JSON Continues its Winning Streak Over XML
* JSON versus XML: Your Choice Matters More Than You Think
* I am in your HTTP headers, attacking your application
* The Web 2.0 API: From collaborating to compromised
* IT as a Service: A Stateless Infrastructure Architecture Model
* JSON Activity Streams and the Other Consumerization of IT

F5 Friday: Applications aren't protocols. They're Opportunities.

Applications are as integral to F5 technologies as they are to your business.

An old adage holds that an individual can be judged by the company he keeps. If that holds true for organizations, then F5 would do well to be judged by the vast array of individual contributors, partners, and customers in its ecosystem. Its long history of partnering with companies like Microsoft, IBM, HP, Dell, VMware, Oracle, and SAP, together with its astounding community of over 160,000 engineers, administrators and developers, speaks volumes about its commitment to and ability to develop joint and custom solutions.

F5 is committed to delivering applications no matter where they might reside or what architecture they might be using. Because of its full proxy architecture, F5’s ADC platform is able to intercept, inspect and interact with applications at every layer of the network. That means tuning TCP stacks for mobile apps, protecting web applications from malicious code whether they’re talking JSON or XML, and optimizing delivery via HTTP (or HTTP 2.0 or SPDY) by understanding the myriad types of content that make up a web application: CSS, images, JavaScript and HTML.

But being application-driven goes beyond delivery optimization and must cover the broad spectrum of technologies needed not only to deliver an app to a consumer or employee, but to manage its availability, scale and security. Every application requires a supporting cast of services to meet a specific set of business and user expectations, such as logging, monitoring and failover. Over the 18 years in which F5 has been delivering applications it has developed technologies specifically geared to making sure these supporting services are driven by applications, imbuing each of them with the application awareness and intelligence necessary to efficiently scale, secure and keep them available.
With the increasing adoption of hybrid cloud architectures and the need to operationally scale the data center, it is important to consider the depth and breadth to which ADC automation and orchestration support an application focus. Whether looking at APIs or management capabilities, an ADC should provide the means by which the services applications need can be holistically provisioned and managed from the perspective of the application, not the individual services.

Technology that is application-driven, giving app owners and administrators the ability to programmatically define provisioning and management of all the application services needed to deliver the application, is critical moving forward to ensure success. F5 iApps and F5 BIG-IQ Cloud do just that, enabling app owners and operations to rapidly provision services that improve the security, availability and performance of the applications that are the future of the business.

That programmability is important, especially as it relates to applications, according to our recent survey (results forthcoming) in which a plurality of respondents indicated application templates are "somewhat or very important" to the provisioning of their applications, along with other forms of programmability associated with software-defined architectures including cloud computing.

Applications increasingly represent opportunity, whether it's to improve productivity or increase profit. Capabilities that improve the success rate of those applications are imperative and require a deeper understanding of an application and its unique delivery needs than a protocol and a port. F5 not only partners with application providers, it encapsulates the expertise and knowledge of how best to deliver those applications in its technologies and offers that same capability to each and every organization to tailor the delivery of their applications to meet and exceed security, reliability and performance goals.
Because applications aren't just a set of protocols and ports, they're opportunities. And how you respond to opportunity is as important as opening the door in the first place.

IBM SDN VE and F5 SDAS: Provisioning at the Speed of Business

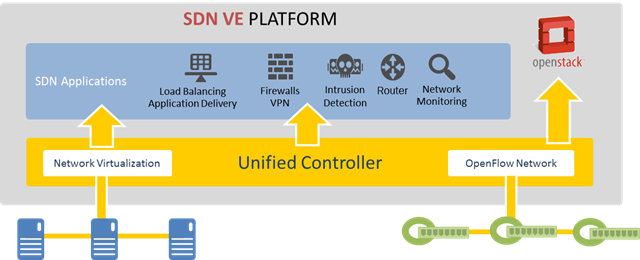

#IBMSDE #F5 #OpenDaylight #OpenStack #SDN

As enterprises become familiar with SDN and its benefits, it should be no surprise that one of the most familiar names in enterprise data centers would offer up a solution designed to automate and speed the process of setting up and managing these new networks. That's ultimately the goal of software-defined technologies like SDN and F5 SDAS: to improve the velocity of service provisioning so that IT moves at the speed of business.

“It takes about 5 days from an end-end point of view to provision something like that (a multi-tier system).” Goal is to “get at least to sub-one day.” -- John Manville, Cisco IT; The Power of a Programmable Cloud, OFC 2013 (OM2D.2)

At the OpenDaylight summit, IBM announced its IBM Software Defined Network for Virtual Environments (SDN VE), designed to help organizations realize the goal of service provisioning at the speed of business. SDN VE is one of the first offerings built with open source and interfaces from the OpenDaylight project as well as support for OpenStack. SDN VE consists of the unified controller, virtual switches for creating overlays, gateways to non-SDN environments and open interfaces for application integration. IBM SDN VE provides a scalable network overlay that works over existing network infrastructure, using a standard frame format. Leveraging both network virtualization and OpenFlow-enabled physical infrastructure gives organizations flexibility in design and deployment.

It should be no surprise, either, that as the leading provider of application delivery services (L4-7) and long time IBM business partner on the application delivery front, F5 has joined the IBM SDN Partner Ecosystem. Within the SDN VE framework, F5 SDAS will provide load balancing and application services via what is rapidly becoming the standard for real-time network integration, service chaining.
IBM's approach aligns well with F5 Synthesis and its Software Defined Application Services (SDAS), with both focusing on improving the speed of service provisioning without sacrificing critical application and infrastructure services like availability, performance, security, mobility and access and identity control. F5 is pleased to continue to expand its partnership with IBM with SDN VE and SDAS.

Deploying BIG-IP with Tivoli Security Access Manager’s WebSeal Proxy

Availability, it always comes back to this doesn’t it? Sometimes a solution is so straightforward that I wonder if it deserves to be documented, but then I get a lot of phone calls and emails and my arm is twisted into publishing the solution. Not to downplay the significance of these solutions, because they mean a lot. If your authentication and entitlement system is not on-line, then neither are any of the applications protected by that system.

I am pleased to be able to share the results of testing and implementation of the load balancing solution for Security Access Manager. You can find the guide on f5.com: http://www.f5.com/pdf/deployment-guides/ibm-security-access-manager-dg.pdf

We are looking at three basic components with this guide: configuring your WebSeal servers to be identical, configuring the Virtual Server and its components and finally, adding acceleration features if your WebSeal proxy is also serving content. Pretty straightforward, but the benefits are measurable in terms of uptime, increased performance on the servers and a better experience for the users. Let’s drill down into the details a bit, and then you can go try it for yourself via the deployment guide.

The first component in this solution is to make sure the WebSeal servers 1 through N are configured to be identical. This ensures that a user who is load balanced to any of the WebSeal servers will be authorized and entitled to the same root. In other words, this step makes sure that authorization evaluations are identical on all the hosts.

Second, configuration of the BIG-IP is the fairly straightforward part of this solution. Nodes are created for each server, a pool is created from the members (node+port), and monitors and profiles (TCP, HTTP and compression) are created and applied. One of the nicest features available in this solution comes in at this step.
We recommend using a step-down encryption methodology to maintain encryption while reducing the load on the WebSeal servers. By using 2k keys on the client side of the BIG-IP and 1k keys on the server side, the workload for the WebSeal servers is more than halved. That is a big savings that reserves more CPU for the authorization and entitlement tasks at hand.

Finally, I was inspired to add the acceleration features to this document by my colleagues in Japan who rolled this out for a customer. By attaching a Web Acceleration profile, using the new Application Acceleration Manager (AAM - http://www.f5.com/products/big-ip/big-ip-application-acceleration-manager/overview/ ) we are able to see significant gains in user experience and reduction in server CPU and usage. You may be familiar with AAM if you previously used Web Accelerator.

I hope you enjoy the guide and provide your feedback.

VIVA LAS VEGAS!! IBM Impact here we come…

We are excited to have our first presence at the IBM Impact conference beginning on Sunday. F5 will have a booth, and I will be speaking on the topic of high availability architecture, security and performance on Monday, April 29th. If you are going to be at the conference, definitely stop by the session, which will be in Marcello room number 4405 from noon to 12:45 pm. The conference itself is at the Venetian, of course.

There are a few things that really excite me about this conference. First, the chance to meet all our WebSphere colleagues. We do so much work with WebSphere, and our joint customers have so much feedback for us about the solutions, that I’m really looking forward to having some deep-dive discussions with IBM Architects. Second, as always, I’m really looking forward to learning from the attendees. The IBM Pulse conference has always been a very technical conference and I believe Impact will be the same, with the chance to get into architectural discussions about customer environments and possible solutions to problems.

On the negative side, there’s the weather: 100 degrees with SIX percent humidity. This is really tough for a Seattle person. I’ll be trying to drown myself with water and staying in air conditioned zones. You have to understand, when we get to 70 in Seattle, people start sheltering in place. But, alas, it’s a dry heat as they say, so let’s see how it works out. See you there!

F5 Application Security and IBM Guardium Security

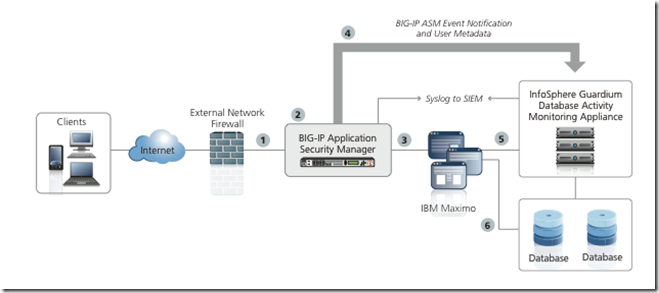

With F5’s presence at the RSA and Pulse conferences we are going to be talking a bit about the new integration with Guardium that I blogged about last week. To help the conversation along, I put together a short video explaining the solution. Check out the video and find me at RSA and Pulse to discuss this in more detail. Of course, the deployment guide is available for you here: http://www.f5.com/pdf/deployment-guides/ibm-guardium-asm-dg.pdf and you can bet I’ll be back with a more detailed video showing the discrete implementation steps.

Video Table of Contents for the impatient:

* Minute 0 to 2 – Whiteboard of the architecture
* Minute 2 to 3:30 – Network diagram and overview
* Minute 3:30 to 4:30 – Demonstration of the IBM WebSphere Commerce Server
* Minute 4:30 to 5:00 – Demonstration of the Application Security Manager (ASM) interface
* Minute 5:00 to 7:00 – Demonstration of the IBM Guardium interface (This demonstrates the linking of user data from ASM with SQL data from Guardium for user Nojan)
* Minute 7:00 to 8:00 – Demonstration of the IBM Guardium interface (This demonstrates the linking of user data from ASM with SQL data from Guardium for user David)

F5 and IBM announce ASM and InfoSphere Guardium Database security integration

Another month and another set of solutions from the IBM and F5 partnership: this time, some exciting developments in the security and reporting space with F5 Application Security Manager (ASM) and IBM InfoSphere Guardium. Without further wait, here is the deployment guide; please go through it and send me your feedback. I will just add a few notes about this solution here for the TL;DR crowd. Find the deployment guide on f5.com here: http://www.f5.com/pdf/deployment-guides/ibm-guardium-asm-dg.pdf

So, what’s the fuss, you may ask? We have created a real-time link between BIG-IP ASM and InfoSphere Guardium, which was released with BIG-IP Version 11.3 Hotfix1. BIG-IP sits in front of your environment along with BIG-IP Local Traffic Manager (LTM) (#2 in the diagram here). ASM inspects all the HTTP/S traffic coming into the site. ASM then sends relevant information (as you determine by configuration settings) to Guardium (#4 in the diagram here) so the data can be correlated in real time with the database information that Guardium is also collecting.

As a result of this technology, administrators can see in very near real time the SQL statements that are being caused by front-end users, along with those users’ relevant metadata (IP address, etc.). This level of introspection and reporting extends your defense-in-depth capabilities. A truly powerful combination between F5 and IBM, one which I look forward to talking to you all about at RSA and Pulse in the coming month.

BIG-IP WAN Optimization Module (WOM) and IBM DB2 Replication

In this post I will share some of the configuration details of accelerating DB2 replication using two popular replication technologies, SQL Replication (and optionally SQL Replication with MQ) and high availability disaster recovery (HADR) Replication. This work started when I had the great pleasure of working with DB2 for the last several quarters. I explored the latest features in DB2 version 9.7 and version 10, and through the IBM Information On Demand Conference I was able to vet and validate my ideas with the great technical resources available at the conference. I would like to thank Martin Schlegel and Mohamed El-Bishbeashy for their great training to validate my independent research.

F5 now has solutions for the most common types of DB2 Replication, SQL Replication and HADR, enabling faster replication over longer distances, maximizing bandwidth and allowing connections with more latency to be used.

HADR or SQL Replication?

The choice between using HADR or SQL replication is one that will require a lot of investigation on the part of any organization. While HADR is easier to set up and maintain, SQL Replication provides some excellent benefits. In either case, my testing showed that BIG-IP WOM can bring benefits to either solution.

Why use WAN Optimization technology?

Security
* Encrypt: Hardware based encryption secures data transfers if they are not already encrypted (HADR) and offloads CPU intensive tasks from servers if they are already encrypted (SQL Rep).

Bandwidth
* Compression: Hardware based compression reduces the amount of bandwidth needed and effectively speeds transfers.
* Deduplication: Data dedup reduces the amount of bandwidth needed and effectively speeds transfers.
Comparison of HADR versus SQL with MQ Replication

Below are some of the comparison points between HADR and SQL Q Replication. You can read more about the differences through various IBM Redbooks found on IBM.com/db2

| Feature | HADR | SQL Q Replication |
| --- | --- | --- |
| Scope of replication | Entire DB2 Database | Tables |
| Data propagation method | Log shipping | Capture/Apply tables |
| Synchronous? | Yes | No |
| Asynchronous? | Yes | Yes |
| Automatic client routing to standby? | Yes | Yes |
| Operating systems | Linux, Unix, Windows | Linux, Unix, Windows, Z/OS |
| Applications read from the standby? | No | Yes |
| Applications write to the standby? | No | Yes |
| SQL DDL replicated? | Yes | No |
| Hardware supported | Hardware, OS, version of DB2 must be identical | Hardware, OS, version of DB2 may be different |
| Tools for monitoring? | Yes | Yes |
| Network compression or encryption? | No | Yes |
| Partitioned DB support? | No | Yes |

Configuration Basics

DB2 Configuration for HADR

Without sounding too biased, purely from an implementation standpoint I felt that HADR was by far the simpler solution to set up and maintain.

Prerequisites:
* Clock synchronization
* Trust between hosts
* Route between hosts
* DNS or /etc/hosts configuration
* Identical DB2 instance users and DB2 fenced users, with identical UID and GID on both hosts
* Identical home directories
* Identical port number and name
* Automatic instance start must be turned off

Configuration: The actual configuration for HADR is to modify the database configuration to set the proper parameters. Just for reference, these parameters include:
* HADR_LOCAL_HOST
* HADR_REMOTE_HOST
* HADR_LOCAL_SVC
* HADR_REMOTE_SVC
* HADR_REMOTE_INST
* HADR_SYNCMODE
* HADR_PEER_WINDOW
* HADR_TIMEOUT

You can read more about HADR through the Redbook here: http://www.redbooks.ibm.com/abstracts/sg247363.html

DB2 Configuration for SQL Q-Replication

Configuration for SQL, optionally with MQ, has two parts. First, the MQ setup should be completed if that is utilized.
The second part, creation of Capture and Apply tables, is universal whether Q-Replication is used or not; only the BIG-IP configuration would change if Q-Replication is utilized.

MQ setup:
* Define WebSphere MQ queue managers
* Define WebSphere MQ Channels
* Define WebSphere MQ local and remote queues

Configuration of Capture and Apply Tables:
* Create source and target control tables
* Enable both databases for replication
* Create replication queue maps if MQ replication is being utilized
* Create Q subscriptions if MQ replication is being utilized

You can read more about SQL Replication here: http://publib.boulder.ibm.com/infocenter/db2luw/v8/index.jsp?topic=/com.ibm.db2.ii.doc/start/cgpch200.htm

BIG-IP Configuration

BIG-IP configuration for the WOM module is straightforward whether HADR or SQL Replication is being used. In the case of SQL Replication with MQ, the configuration is different, as compression and encryption should be turned off on MQ first for maximum results. This is a scenario I have not yet tested, so for this configuration I am focusing directly on SQL replication and HADR replication.

In my tests I enabled both data deduplication and compression, testing with two BIG-IPs over a simulated WAN using a LanForge Virtual Appliance. I looked at bandwidths from 45 Mbps and 100 ms of latency up to 622 Mbps and 20 ms of latency. My dataset was large blobs of text data. In the diagram at the start of the article you can see that this is a symmetric solution requiring BIG-IPs on either end, one in each data center. A license for the WOM module is required.

BIG-IP Prerequisites:
* Symmetric deployment requires BIG-IPs in each data center
* WAN Optimization Module (WOM) license

BIG-IP Configuration: After completing the initial configuration of the WOM, the only step required is to create an optimized application entry for DB2. Your database port, along with the DB2 control port, should be optimized. In my case as below, that would be port 50000 and port 523.
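The bandwidth and latency figures from the tests above give a feel for why distance hurts replication. The short Python sketch below computes the bandwidth-delay product, the amount of data that has to be in flight to keep a link busy, for the two test profiles. It assumes the quoted latency is a round-trip time, which the article does not state, and the function names are purely illustrative.

```python
def bandwidth_delay_product_bytes(bandwidth_mbps: float, rtt_ms: float) -> float:
    """Bytes that must be in flight to keep a link of this speed and delay full."""
    bits_in_flight = bandwidth_mbps * 1_000_000 * rtt_ms / 1000
    return bits_in_flight / 8


def window_limited_throughput_mbps(window_bytes: int, rtt_ms: float) -> float:
    """Upper bound on one connection's throughput for a fixed window size."""
    bits_per_second = window_bytes * 8 * 1000 / rtt_ms
    return bits_per_second / 1_000_000


# Link profiles taken from the tests described above (latency assumed to be RTT).
slow_link = bandwidth_delay_product_bytes(45, 100)
fast_link = bandwidth_delay_product_bytes(622, 20)

# 65,535 bytes is the largest TCP window without window scaling.
unscaled = window_limited_throughput_mbps(65_535, 100)
```

Under these assumptions the 45 Mbps / 100 ms link needs roughly 560 KB in flight and the 622 Mbps / 20 ms link roughly 1.5 MB, while a single connection limited to an unscaled 64 KB window could move only about 5.2 Mbps at 100 ms. Sending fewer bytes, which is what compression and deduplication do, is one way to get more useful work out of a long, high-latency path.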
I selected memory-based dedup and saw the best results in this mode in my tests. For detailed information about basic BIG-IP WOM configuration, see the following chapter of BIG-IP Documentation http://support.f5.com/kb/en-us/products/wan_optimization/manuals/product/wom_config_11_0_0/1.html (Free login account may be required).

F5 at the IBM Pulse Conference

F5 accelerates and optimizes Maximo deployments. I am pleased to announce that F5 will once again be at the IBM Pulse conference in 2013. Along with Ron Carovano, Peter Silva and other members of the IBM team at F5, we will be manning the booth to answer your questions and discuss your architecture as well as provide demos of our products. The Pulse Conference is happening at the MGM Grand in Las Vegas from March 3rd until the 6th. Please enjoy this video that demonstrates F5’s integration with IBM Maximo and we will see you at the show.

IBM Netcool Configuration Manager Demonstration

IBM has added support for BIG-IP within their Tivoli Netcool Configuration Manager with the release of Driver Pack 15. In this short video, I show an overview of how Netcool looks and works with BIG-IP LTM. This release opens up an array of opportunities to manage BIG-IP both in the appliance world and the virtual world. Jump to the 2 minute mark of this 7 minute video if you are already familiar with what Netcool does, in order to see the specific parts relating to BIG-IP. As usual, if you have any questions drop me an email or post a comment.